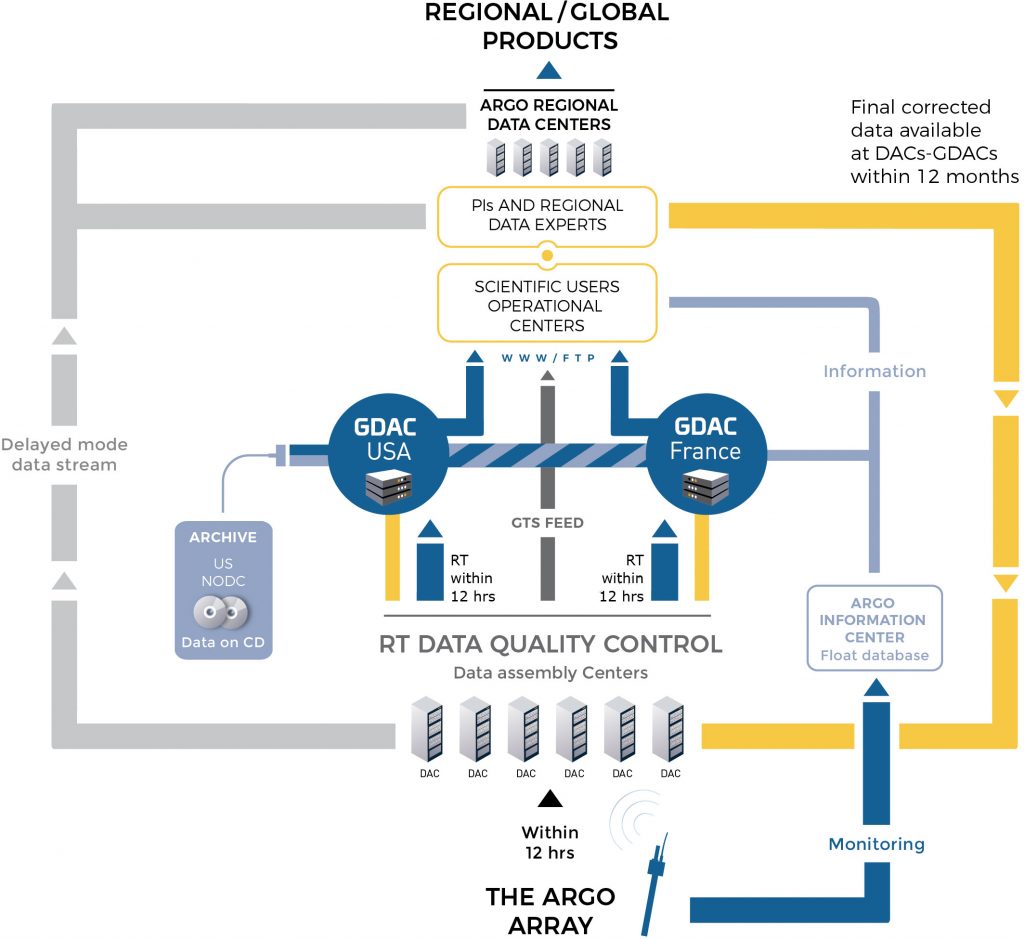

When a float surfaces, it transmits its data and its position is determined, now usually by GPS but sometimes still by Système Argos. The float data are sent to national data centers (DACs), and float positions are monitored by OceanOPS in France. At the DACs, the data undergo initial scrutiny using an agreed-upon set of real-time quality control tests (see the ADMT Documentation page for a description of the tests), in which erroneous data are flagged and/or corrected. The data are then passed to Argo’s two Global Data Assembly Centers (GDACs) in Brest, France and Monterey, California. The GDACs are the first stage at which the freely available data can be obtained via the internet, and they synchronize their holdings so that consistent data are available at both sites. The data reach operational ocean and climate forecast/analysis centers via the Global Telecommunications System (GTS).

The target is for these “real-time” data to be available within approximately 12 – 24 hours of their transmission from the float.
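As a minimal sketch of how the timeliness target could be checked for a single profile, the snippet below compares a transmission time with the time the data became available. The function names and the example timestamps are hypothetical, and the 24 hour threshold is simply the upper end of the target range quoted above.

```python
from datetime import datetime, timedelta, timezone

# Upper end of the 12-24 hour real-time target quoted in the text.
REALTIME_TARGET = timedelta(hours=24)


def delivery_delay(transmitted: datetime, available: datetime) -> timedelta:
    """Elapsed time between float transmission and data availability."""
    return available - transmitted


def meets_realtime_target(
    transmitted: datetime,
    available: datetime,
    target: timedelta = REALTIME_TARGET,
) -> bool:
    """True if the data became available no later than `target` after transmission."""
    delay = delivery_delay(transmitted, available)
    return timedelta(0) <= delay <= target


# Hypothetical timestamps, for illustration only.
sent = datetime(2024, 3, 1, 6, 0, tzinfo=timezone.utc)
ready = datetime(2024, 3, 1, 20, 30, tzinfo=timezone.utc)

print(delivery_delay(sent, ready))         # 14:30:00
print(meets_realtime_target(sent, ready))  # True
```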

There are many ways to access Argo data which are documented here. Some of them include the following:

- Argo netCDF files from the GDACs, available via FTP, HTTP, rsync or the Argo DOI

- Argo data products including gridded fields, velocity products and other profile collections

- Argo data visualizations

- Model outputs

To help you get started using Argo netCDF profile files, there is a quick start guide.
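For the HTTP route to the GDACs, a small helper can compose the address of a single-cycle profile file. This is a sketch under assumptions, not an official client: the server roots and the `dac/<center>/<float>/profiles/` layout reflect how the GDACs are commonly organized and should be verified against the current Argo data access documentation, and the center name, float number and cycle used here are illustrative. The `R` and `D` file prefixes distinguish real-time from delayed-mode files.

```python
# Assumed GDAC HTTP roots; confirm against the Argo data access documentation.
GDAC_ROOTS = {
    "france": "https://data-argo.ifremer.fr",
    "us": "https://usgodae.org/pub/outgoing/argo",
}


def profile_file_url(
    dac: str,
    wmo: int,
    cycle: int,
    delayed_mode: bool = False,
    gdac: str = "france",
) -> str:
    """Build the URL of one single-cycle profile file on a GDAC.

    dac          -- name of the national data center directory
    wmo          -- float identifier (WMO number)
    cycle        -- cycle number, zero-padded to at least three digits
    delayed_mode -- use the 'D' (delayed-mode) prefix instead of 'R' (real-time)
    """
    prefix = "D" if delayed_mode else "R"
    root = GDAC_ROOTS[gdac]
    return f"{root}/dac/{dac}/{wmo}/profiles/{prefix}{wmo}_{cycle:03d}.nc"


# Illustrative values only.
print(profile_file_url("coriolis", 6902746, 1))
print(profile_file_url("coriolis", 6902746, 1, delayed_mode=True, gdac="us"))
```

Because the two GDACs synchronize their holdings, the same relative path is expected to resolve on either server, which is why only the root changes between them.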

In addition to the real-time data stream, Argo has the potential, after careful data assessment, to provide salinity/temperature/pressure profiles that approach ship-based data accuracy.

Once a float has left the laboratory, or has been launched from a research ship that might make a nearby CTD cast, there is in general no further opportunity to check the calibration of its sensors. One means of adjusting the data is to look at deviations of the float data from a stable, deep temperature/salinity climatology (Wong et al., 2003; Böhme and Send, 2005; Owens and Wong, 2009; Cabanes et al., 2016), or to compare profiles from floats that coincide in space and time. The OWC method (Owens and Wong, 2009; Cabanes et al., 2016) has been adopted by Argo as its standard means of delayed-mode data quality control. Delayed-mode quality control is the responsibility of researchers in each country, in collaboration with the appropriate national data center. It has been recommended that delayed-mode data inspection be carried out on a 1 year long record so that sudden jumps in calibration may be distinguished from long-term drift or water mass property changes. This imposes a minimum 12 month delay on the availability of delayed-mode data.
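The idea of comparing float data with a stable deep reference can be illustrated with a deliberately simplified sketch. This is not the OWC method, which involves objective mapping of reference data and a fit over the float's time series. Here the salinity offset is just the median difference between float and reference salinity on matched deep levels, and all values and function names are hypothetical.

```python
from statistics import median


def deep_salinity_offset(float_salinity, reference_salinity):
    """Median float-minus-reference salinity on matched deep levels."""
    if len(float_salinity) != len(reference_salinity):
        raise ValueError("float and reference levels must be matched one to one")
    if not float_salinity:
        raise ValueError("at least one deep level is required")
    return median(f - r for f, r in zip(float_salinity, reference_salinity))


def apply_offset(float_salinity, offset):
    """Subtract the estimated offset from every float salinity value."""
    return [s - offset for s in float_salinity]


# Hypothetical deep-level salinities: the float reads about 0.010 too salty.
float_deep = [34.912, 34.905, 34.899, 34.895]
reference_deep = [34.902, 34.896, 34.888, 34.885]

offset = deep_salinity_offset(float_deep, reference_deep)
adjusted = apply_offset(float_deep, offset)
print(round(offset, 3))  # 0.01
```

The median is used instead of the mean so that a single anomalous level has little influence on the estimate. Separating a sudden jump from a slow drift requires repeating such a comparison over many cycles, which is why a long record is inspected before delayed-mode data are released.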

This system was adopted in 2004 and is now being applied to Argo data. These delayed-mode data are currently available from the GDACs. To learn more about the data management of Argo and how to use the Argo data effectively, visit the Argo Data Management Website.

An additional phase of Argo data management occurs at a regional level at the Argo Regional Centers (ARCs). This enables the accumulation of consistent regional data sets and the production of Argo based products. To learn more about the Argo Regional Centers, go to the ARC page.

OceanOPS is a source of information about the development and performance of the global array and the national programs that contribute to it.

The final repository for Argo data is the US National Centers for Environmental Information (NCEI).